Starting with Reinforcement Learning

Finally getting there to build our "money-printing machine"? Hmmm not yet. But let's get started at least.

Over the last few months I have focused fully on algorithmic trading with Reinforcement Learning, and I have summarised most of the papers I have read in this post.

Now I can start writing about my observations and what my experiments will look like.

Okay… let’s start.

Markov Decision Process Formalisation

The theory of Markov Decision Processes (MDPs), also known as sequential stochastic optimization, discrete-time stochastic control and stochastic programming, studies the sequential optimization of discrete-time stochastic systems. The basic object is a discrete-time stochastic system whose transition mechanism can be controlled over time. Each control policy defines a stochastic process and the values of the objective functions associated with this process. The goal is to select a ‘good’ control policy.

In real life, human decisions have two types of impact: they cost or save time, money or other resources (or bring revenue), and they influence the future by changing the dynamics of the system. In many situations, the decision with the largest immediate profit may not be good for the future. MDPs model this paradigm and provide results on the structure and existence of good policies and on methods for calculating them. MDPs have gained the attention of researchers because they can model a large variety of real-life problems.

A Markov Decision Process is defined by the following objects:

A State Space X;

An Action Space A;

Set A(x) of available actions at states x ∈ X

Transition probabilities, denoted by p(Y|x,a)

Reward function r(x,a) denoting the one-step reward using action a in state x

Putting this together: there is a stochastic system with state space X. When the system is in state x ∈ X, a decision-maker (the agent) selects an action a from the action set A(x) available at that state. After action a is taken in state x, the system moves to the next state according to the probability distribution p(· | x,a), and the decision-maker collects a one-step reward r(x,a). The selection of an action may depend on the current state of the system, the current time, and the available information about the history of the system. At each step, the decision-maker may select a particular action or, more generally, a probability distribution on the set of available actions A(x). Decisions of the first type are called nonrandomised and decisions of the second type are called randomised.

An MDP is called finite if the state and action sets are finite. We say that a set is discrete if it is finite or countable.
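To make the definition concrete, here is a minimal sketch of a finite MDP in plain Python. The two states, the action names and all the numbers are made up for illustration; only the structure (X, A(x), p(· | x,a), r(x,a)) comes from the definition above.

```python
import random

# Hypothetical two-state MDP; every name and number here is illustrative.
STATES = ["flat", "invested"]                                        # state space X
ACTIONS = {"flat": ["wait", "buy"], "invested": ["hold", "sell"]}    # A(x)

# Transition probabilities p(y | x, a), stored as {(x, a): {y: probability}}.
P = {
    ("flat", "wait"): {"flat": 1.0},
    ("flat", "buy"): {"invested": 0.9, "flat": 0.1},
    ("invested", "hold"): {"invested": 1.0},
    ("invested", "sell"): {"flat": 1.0},
}

# One-step rewards r(x, a).
R = {
    ("flat", "wait"): 0.0,
    ("flat", "buy"): -0.1,
    ("invested", "hold"): 0.5,
    ("invested", "sell"): 0.2,
}


def step(x, a, rng):
    """Sample the next state y ~ p(. | x, a) and return (y, r(x, a))."""
    if a not in ACTIONS[x]:
        raise ValueError(f"action {a!r} is not available in state {x!r}")
    next_states = list(P[(x, a)])
    weights = [P[(x, a)][y] for y in next_states]
    y = rng.choices(next_states, weights=weights, k=1)[0]
    return y, R[(x, a)]


def random_policy(x, rng):
    """A randomised decision rule: the uniform distribution on A(x)."""
    return rng.choice(ACTIONS[x])


# Roll the system forward for a few steps and add up the one-step rewards.
rng = random.Random(0)
x, total = "flat", 0.0
for t in range(5):
    a = random_policy(x, rng)
    x, r = step(x, a, rng)
    total += r
```

A nonrandomised decision rule would simply be a function that returns one fixed action for each state instead of sampling from A(x).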

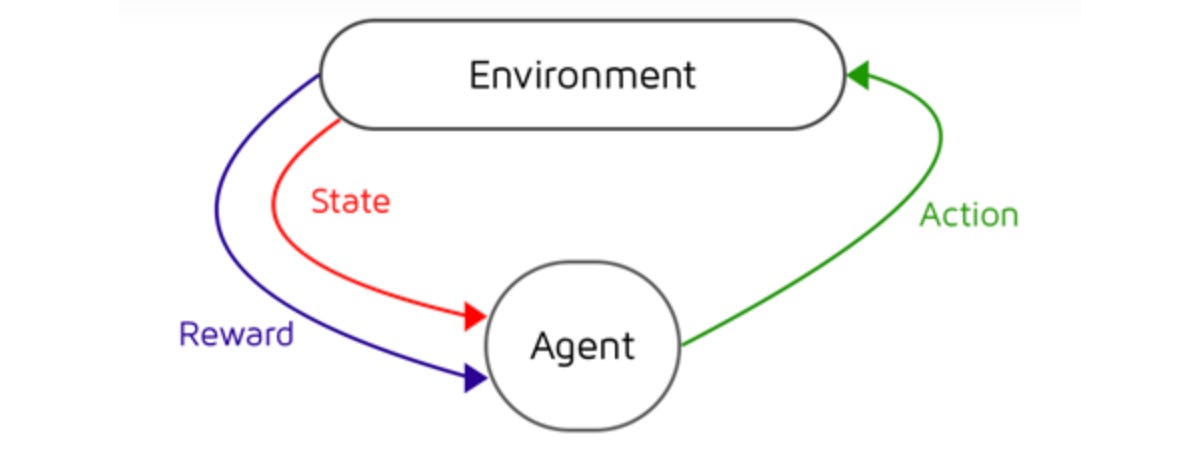

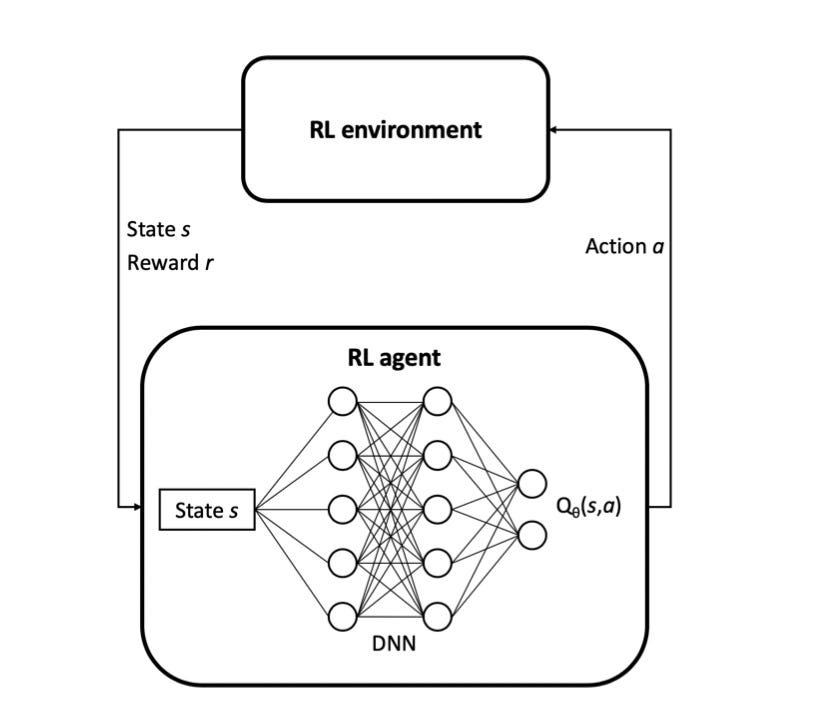

Reinforcement learning can be regarded as a process in which an agent learns to adjust its policy by repeatedly interacting with an unknown environment. The unknown environment is often formalised as an MDP. This definition assumes that the Markov property holds in the environment, which means the transition to the next state s(t+1) is conditional only on the current state s(t) and action a(t). However, in the real world it is not reasonable to assume that agents can access the full state of the environment, so from the agent's point of view the Markov property rarely holds. A more general model, the partially observable Markov decision process (POMDP), can capture the dynamics of many real-world environments by explicitly acknowledging that the agent only catches a partial glimpse of the current state. More specifically, the agent receives an observation o ∈ Ω instead of the true state s. The observation o is generated from the current system state according to a probability distribution O(s) = P(o|s).
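The observation step can be sketched in a few lines. This is only a toy illustration: the hidden "regimes", the two possible observations and the probabilities are all assumptions, not something taken from the papers.

```python
import random

# Hypothetical hidden market regimes (states s) and what the agent can see
# (observations o). The probabilities are invented for illustration.
OBS_PROB = {
    "bull": {"up": 0.7, "down": 0.3},   # P(o | s = bull)
    "bear": {"up": 0.4, "down": 0.6},   # P(o | s = bear)
}


def observe(s, rng):
    """Sample o ~ P(o | s); the agent never receives s itself."""
    outcomes = list(OBS_PROB[s])
    weights = [OBS_PROB[s][o] for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]


# The agent only ever sees a stream of observations like this one.
rng = random.Random(42)
observations = [observe("bull", rng) for _ in range(1000)]
up_share = observations.count("up") / len(observations)
```

Because both regimes can produce both observations, a single "up" day does not tell the agent which regime it is in, and that is exactly the partial observability described above.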

We can formulate the trading problem as a Markov Decision Process in which an agent interacts with the environment at discrete time steps. At each time step t, the agent receives some representation of the environment's state.

In the literature, many different features have been used to represent the state space. Among these features, a security’s past prices are always included, and related technical indicators are often used. Possible state features are past prices, returns over different horizons (the past month, 2 months, 3 months and 1 year) and technical indicators, including Moving Average Convergence Divergence (MACD) and the Relative Strength Index (RSI), as well as the turbulence index, which indicates whether the market is in extreme conditions.

Further inputs are private variables: the remaining trading cash and the Sharpe ratio of the previous state.

The RSI is an oscillating indicator moving between 0 and 100. It indicates the oversold (a reading below 20) or overbought (above 80) conditions of an asset by measuring the magnitude of recent price changes. One may include this indicator with a look-back window of 30 days in the state representation.

To sum up, according to most of the papers I gathered, the state space includes the following inputs:
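As a rough sketch, the RSI over a 30-day look-back window could be computed like this. I am assuming the simple-average variant (average gain divided by average loss over the window); many libraries use Wilder's exponential smoothing instead, so numbers may differ slightly. The value returned for a window without any losses is a convention I picked.

```python
def rsi(prices, window=30):
    """Simple-average RSI over the last `window` price changes (0 to 100)."""
    if len(prices) < window + 1:
        raise ValueError("need at least window + 1 prices")
    recent = prices[-(window + 1):]
    changes = [later - earlier for earlier, later in zip(recent, recent[1:])]
    avg_gain = sum(max(c, 0.0) for c in changes) / window
    avg_loss = sum(max(-c, 0.0) for c in changes) / window
    if avg_loss == 0.0:
        # Assumed convention: 100 if prices only rose, 50 if they did not move.
        return 100.0 if avg_gain > 0.0 else 50.0
    rs = avg_gain / avg_loss               # relative strength
    return 100.0 - 100.0 / (1.0 + rs)


def rsi_signal(value, oversold=20.0, overbought=80.0):
    """Label a reading using the thresholds mentioned in the text."""
    if value < oversold:
        return "oversold"
    if value > overbought:
        return "overbought"
    return "neutral"
```

For example, `rsi_signal(rsi(closing_prices))` would give one categorical feature per day, where `closing_prices` is a hypothetical list of at least 31 daily closes.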

Price Data

Technical Indicators

Risk Metric (Sharpe/Sortino Ratio)

Number of Shares owned

Available Cash

My own additional ideas would be:

Portfolio value if everything were sold immediately

Last 5/10 trading positions and their amounts.

Both Stock Market and Crypto Market trading volume
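One way to picture these inputs is as a single record that gets flattened into a feature vector for the agent. The class and field names below are hypothetical, and the liquidation value ignores transaction costs to keep the sketch short.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TradingState:
    """Hypothetical container for the state inputs listed above."""
    recent_prices: List[float]   # price data, oldest first
    indicators: List[float]      # e.g. MACD, RSI, turbulence index
    risk_metric: float           # e.g. Sharpe or Sortino ratio
    shares_owned: float
    cash: float

    def liquidation_value(self) -> float:
        """Portfolio value if everything were sold at the latest price."""
        return self.cash + self.shares_owned * self.recent_prices[-1]

    def to_vector(self) -> List[float]:
        """Flatten all inputs into one feature vector."""
        return [
            *self.recent_prices,
            *self.indicators,
            self.risk_metric,
            self.shares_owned,
            self.cash,
            self.liquidation_value(),
        ]


state = TradingState(
    recent_prices=[100.0, 101.0, 102.0],
    indicators=[55.0],
    risk_metric=1.2,
    shares_owned=10.0,
    cash=500.0,
)
features = state.to_vector()
```

In practice the features would probably need scaling before they go into a neural network, since prices, cash and ratios live on very different scales.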

The Optimisation problem: ML/RL tasks solve optimisation problems. I will start by focusing only on single-asset trading optimisation while paying attention to risk. The risk of a strategy is generally measured with the Sharpe ratio or Sortino ratio, which take into account a risk-free investment (e.g., a U.S. Treasury bond or cash). Even though we can acknowledge the significance of these measures, they do have some drawbacks. We can distinguish between two objectives: maximising the expected discounted sum of rewards based on daily returns, or maximising the Sharpe ratio over an infinite time horizon.
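For the first objective, the discounted sum of rewards for one finished episode can be sketched as follows. The discount factor `gamma=0.99` is just a common default I assumed, and a finite list of rewards can only approximate the infinite-horizon sum.

```python
def discounted_return(rewards, gamma=0.99):
    """Return r_0 + gamma * r_1 + gamma^2 * r_2 + ... for one episode."""
    if not 0.0 <= gamma <= 1.0:
        raise ValueError("gamma must lie in [0, 1]")
    total = 0.0
    # Work backwards so each step is a single multiply-and-add.
    for r in reversed(rewards):
        total = r + gamma * total
    return total


# Three daily rewards of 1.0 with gamma = 0.5 give 1 + 0.5 + 0.25.
example = discounted_return([1.0, 1.0, 1.0], gamma=0.5)
```

The agent maximises the expectation of this quantity, so in training it would be averaged over many sampled episodes.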

Action Space: In most of the literature, the action space is a simple set of actions {-1,0,1} where each value represents the position directly, i.e. -1 corresponds to a maximally short position, 0 to no holdings and 1 to a maximally long position. This representation of the action space is also known as target orders, where the output is a trade position rather than a trading decision. However, the real trading environment is more complex. Considering this, one may aim to work with a position-embedded action space such that, with the maximum position n, the action space is extended to {-n,-n+1,...,0,...,n-1,n}, which represents the position held in the next state.
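A small sketch of the position-embedded action space: the action is the target position, and the order that actually has to be sent is the difference to the current position. The function names are my own.

```python
def action_space(n):
    """All target positions {-n, ..., 0, ..., n} for a maximum position n."""
    if n < 1:
        raise ValueError("maximum position n must be at least 1")
    return list(range(-n, n + 1))


def order_size(current_position, target_position, n):
    """Units to buy (positive) or sell (negative) to reach the target."""
    if not -n <= target_position <= n:
        raise ValueError("target position is outside the action space")
    return target_position - current_position


simple = action_space(1)      # the classic {-1, 0, 1} case
extended = action_space(2)    # positions from -2 to 2
```

With n = 2, going from a short position of -1 to a long position of 2 means buying 3 units, i.e. `order_size(-1, 2, 2)`.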

Reward Function: A wide variety of research takes the utility function to be the immediate profit itself (after subtracting transaction costs and slippage), which represents a risk-insensitive trader.

The reward fed to the RL agent completely governs the behaviour of the strategy, so a wise choice of reward function is critical for good performance. There are quite a number of rewards one can choose from or combine: risk-based measures, profitability or cumulative return, number of trades per interval, etc. The RL framework accepts any sort of reward: the denser, the better. For example, in one study the authors test seven different reward functions based on various measures of risk or PnL (profit and loss).
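The risk-insensitive reward (immediate profit minus cost and slippage) could look roughly like this. The exact formula and the two rates are assumptions for illustration; the papers differ in how they model trading frictions.

```python
def step_reward(prev_position, position, price, next_price,
                cost_rate=0.001, slippage_rate=0.0005):
    """One-step profit of holding `position`, net of trading frictions.

    Assumed model: costs and slippage are charged as a fraction of the
    traded notional (units traded times the current price).
    """
    pnl = position * (next_price - price)
    traded_units = abs(position - prev_position)
    friction = (cost_rate + slippage_rate) * traded_units * price
    return pnl - friction


# Going from flat to long 1 unit at 100, then the price moves to 102.
reward_open = step_reward(0, 1, 100.0, 102.0)
# Keeping the position costs nothing extra, so the reward is the raw profit.
reward_hold = step_reward(1, 1, 100.0, 102.0)
```

A risk-sensitive variant would replace or combine `pnl` with a risk-based measure, which is where the different reward functions mentioned above come in.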

Performance Comparison

Several metrics are available to evaluate the trading performance such as

Maximum drawdown: The maximum percentage loss during the trading period

Annual volatility: The annual standard deviation of the portfolio return.

Sharpe ratio: The annualised portfolio return in excess of the risk-free rate per unit of annualised volatility

Calmar ratio: The average portfolio return per unit of maximum drawdown

Average Correlation Coefficient (single-step): It measures a model’s single-step prediction capability.

Average Correlation Coefficient (multi-step): It measures a model’s multi-step prediction capability. The source paper sets W = 20 in its equation (24).

Annual return: The geometric average portfolio return each year.
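Most of the return-based metrics in this list can be computed from a series of daily returns. Below is a minimal sketch under a few assumptions: 252 trading days per year, the sample standard deviation for volatility, and the annual return as the numerator of the Calmar ratio. The correlation-coefficient metrics are left out because they depend on a model's predictions.

```python
import math
import statistics

TRADING_DAYS = 252  # assumed number of trading days per year


def equity_curve(daily_returns):
    """Portfolio value over time, starting from 1.0."""
    value, curve = 1.0, [1.0]
    for r in daily_returns:
        value *= 1.0 + r
        curve.append(value)
    return curve


def max_drawdown(daily_returns):
    """Largest peak-to-trough loss as a fraction of the peak."""
    peak, worst = 1.0, 0.0
    for value in equity_curve(daily_returns):
        peak = max(peak, value)
        worst = max(worst, (peak - value) / peak)
    return worst


def annual_return(daily_returns):
    """Geometric average return per year."""
    final = equity_curve(daily_returns)[-1]
    return final ** (TRADING_DAYS / len(daily_returns)) - 1.0


def annual_volatility(daily_returns):
    """Annualised standard deviation of daily returns."""
    return statistics.stdev(daily_returns) * math.sqrt(TRADING_DAYS)


def sharpe_ratio(daily_returns, risk_free_annual=0.0):
    """Annual excess return per unit of annual volatility."""
    excess = annual_return(daily_returns) - risk_free_annual
    return excess / annual_volatility(daily_returns)


def calmar_ratio(daily_returns):
    """Annual return per unit of maximum drawdown."""
    return annual_return(daily_returns) / max_drawdown(daily_returns)
```

Note that `sharpe_ratio` and `calmar_ratio` divide by zero when the volatility or the drawdown is exactly zero, so real code would need to guard against that.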

Questions:

What do we want to teach the agent? To reduce risk as much as possible, or to be profitable?

Where do we find the data? How do we know whether the data is of good quality?

Ernest Chan says we should learn the profitability of profiting and then focus on the strategy which makes sense. So should the agent learn regime changes and stay on hold while these happen? If so, how do we teach it?

Please write your comments and ideas HERE to me, as I’m very open to anyone and happy about any feedback :)

More interested? —> Read: Reinforcement learning in financial markets - a survey by Fischer, Thomas G.

Next week/month → get into Deep Reinforcement Learning → understand it in-depth and then begin with our programming.

Resources:

https://www.researchgate.net/publication/230887886_Handbook_of_Markov_Decision_Processes_Methods_and_Applications

https://ieeexplore.ieee.org/document/8786132

https://ideas.repec.org/p/zbw/iwqwdp/122018.html